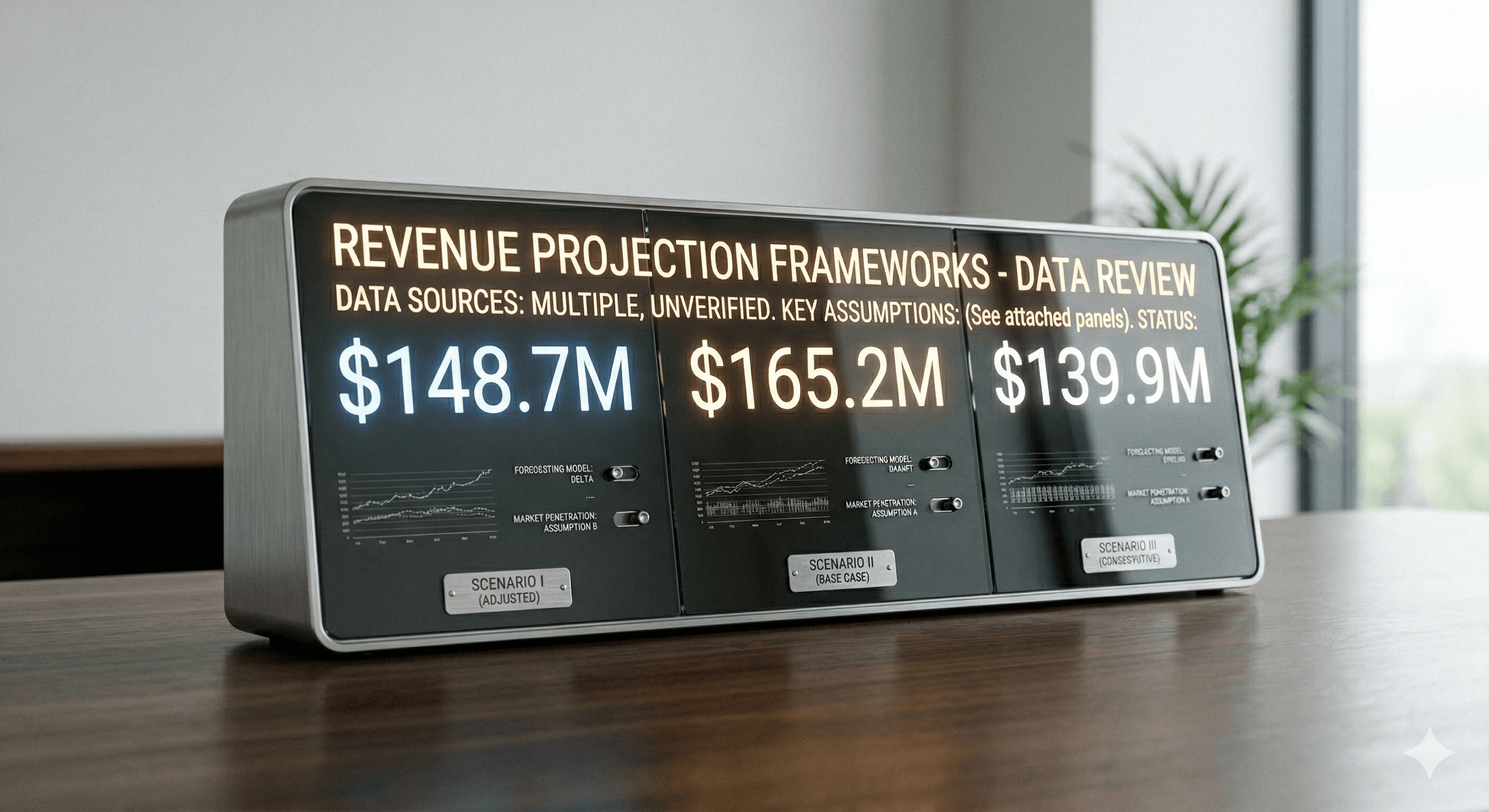

Three people pull “revenue” from the same data warehouse and get three different numbers. Here’s why.

Nobody lied. Nobody made a calculation error. Three smart people pulled the same metric from the same system and got three different answers—and now the meeting is about the data instead of the decision. If this has happened at your organization, you’ve experienced the last-mile problem of business intelligence: getting data out of a warehouse and into the hands of decision-makers in a way they can actually trust.

This is genuinely harder than it sounds. The tools have gotten better—you can now hook a data warehouse up to a slick BI tool and give a non-technical executive the ability to run their own reports without writing a line of SQL. (And god forbid, even an MCP server so they can use an LLM to run queries...) But the tools don’t solve the underlying organizational and governance problems that make data trustworthy. Those require a different kind of work.

The naming problem: your data warehouse is a graveyard of good intentions

A data warehouse that’s been in use for more than a year or two is almost always full of tables built by different teams, at different times, for different reasons, with different naming conventions. Nobody designed it that way on purpose—it just grew.

The result is that there are often 8 or 10 or 15 variations of “revenue” in a mature warehouse. “Gross_revenue,” “net_revenue,” “total_revenue,” “revenue_usd,” “amount_paid.” Some of these are subtly different calculations. Some are the same calculation with different names from different eras. Without documentation (which often doesn’t exist, or exists but hasn’t been updated in two years), an analyst who is new to your data environment will pick the one that sounds right and proceed. And they’ll often pick a different one than the analyst who built the dashboard everyone has been using for six months. This is compounded when you use a tool like visual studio where these are all presented in a drop down with no context for the curious person to consider.

That’s your meeting with three numbers. Nobody did anything wrong. The system just doesn’t have a clear answer to “which revenue field is the real one?”

What is a canonical field, and why doesn’t your organization have one?

A canonical field is the official, agreed-upon version of a metric. “Revenue” means this field, calculated this way, from this table. Not “depends on the context.” Not “usually this one.” This one. This is often referred to as "the single source of truth."

Most organizations don’t have canonical fields because nobody ever sat down and made the organization-wide agreement that canonicalization requires. It’s not a technical problem—the technology to enforce canonical field definitions exists and is mature. It’s a governance problem. It requires the business and the data team to agree on what things mean, write that down somewhere authoritative, and then actually enforce it in the tools people use.

A data dictionary is usually the answer organizations reach for here, and it’s a reasonable start. The problem is that data dictionaries have a shelf life. They get created as part of a project, published somewhere, and then nobody updates them when the data changes. Six months later they’re partially wrong and everybody knows it, so nobody trusts them, so they don’t refer to them, so the problem persists. If you want a data dictionary, you have to staff a data monk to look after this sacred text.

The sustainable version of this is to create canonical definitions that you bake into the semantic layer of your BI tooling, where they get enforced automatically rather than relying on people to look up the right answer in a document they don’t quite trust. This often intersects with a key data warehouse architecting concept called "snowflaking" where intentional decisions are made on which field, from which table, is wired into permanent executive reporting tables. (Often called "gold" tables because of their value to the organization.)

The data dictionary is a reasonable start. But a data dictionary that nobody trusts is just documentation of a problem, not a solution to it.

Your BI tool is only as good as what’s underneath it

Business intelligence tools—Looker, Tableau, Power BI, Metabase, and the rest—do genuinely lower the barrier to data access. But they’re frequently oversold as the solution to the analytics problem, when really they’re a presentation layer on top of a more fundamental problem. Having an easier way to report on chaos and uncertainty isn't a path towards reliable and trustworthy decisions.

Every BI tool needs someone(s) to define the semantic layer: what does “revenue” mean in this tool, how is “campaign performance” calculated, what dimensions are available for slicing. If the semantic layer is built on ambiguous field names and undocumented assumptions—which it usually is, because it gets built by whoever is implementing the BI tool and not by someone who has done a full audit of the underlying data—then the BI tool will faithfully surface those ambiguities to every user who touches it.

The tool will let someone pull both “gross_revenue” and “revenue_usd” and show them side by side without mentioning that one excludes refunds and the other doesn’t. That’s not the tool’s fault. That’s a semantic layer that wasn’t built carefully enough. Behind that technical failure, is a governance failure.

Access and security: the two failure modes

Not everyone should see everything in a data warehouse. Some fields contain personally identifiable information. Some tables have compensation data or board-level financials. Some data is commercially sensitive.

Managing this poorly tends to produce one of two failure modes. Either access is too open—everyone can see everything, which is a compliance and confidentiality problem—or it’s locked down so aggressively that analysts spend half their time waiting for permissions to be granted, which is a productivity problem. (I’ve seen the second failure mode cause more organizational damage than the first, just because of how much friction it introduces into daily work.)

Getting this right requires a permission model that’s actually mapped to how the organization works: what each team legitimately needs, what they shouldn’t have, and a process for handling the access requests that will inevitably fall outside whatever you set up initially. It also takes executive strength to ensure CYA isn't the defining logic of your permissions model.

The query cost problem that will surprise you

One thing that surprises a lot of executives the first time they see it: in a serverless data warehouse like BigQuery, Snowflake, or Redshift Serverless, you pay based on how much data your queries scan, and how much intersecting & remixing those queries ask of the stored data. A well-constructed query on a properly partitioned table might cost fractions of a cent. A poorly constructed query that scans your entire five-year history of raw ad data, run repeatedly by a dashboard that refreshes every ten minutes, can generate a genuinely surprising cloud bill.

I’ve seen this catch organizations completely off guard—a bill that was $400/month for a year suddenly spikes to $4,000 because someone built a new report without thinking about what was happening underneath it. The tricky part is that whoever built the report had no idea. They were just using the BI tool. The query cost problem isn’t visible at the BI layer unless someone has deliberately built in visibility. To solve this you need to see you warehouse as a factory, not a data landfill. Processing raw data into summary data, at the right level and frequency is essential for cost controls.

Query review—understanding what queries are actually running, how expensive they are, and whether the cost is proportionate to the value—is part of good data governance. It’s not glamorous, but it’s the kind of thing that saves you from a very awkward conversation with your CFO. It's also the activity that identifies when you need to move from raw data queries to querying scheduled pre-processed data.

What you’re actually trying to build

All of this—the naming conventions, the canonical fields, the semantic layer, the access controls, the query cost management—is in service of one thing: a data environment where your leadership team trusts the numbers they see and acts on them confidently.

That trust is fragile. It takes one too many meetings where three people have three different answers to make a leadership team skeptical of the entire analytics function. Rebuilding that trust once it’s broken is a lot harder than building it right in the first place.

The good news is that the path forward is almost always clearer from the outside than it looks from inside. These problems are diagnosable. The solutions are almost always practical. It just requires someone who knows what to look for and the organizational will to fix it.

Does your team have a shared, agreed-upon definition of what your key metrics mean and where they come from? That’s usually the first question I ask when I start working with an organization, and the answer tells me a lot about what we’re going to find.

If your analytics function is producing numbers that people argue about instead of act on, I’d love to talk.

Chat with the author

If you'd like to make a connection and perhaps collaborate on something:I'd love to talk with you!No matter if you want to build your professional network,or think there might be a great opportunity to to work together or partner.