This Is the Cheapest AI Will Ever Be

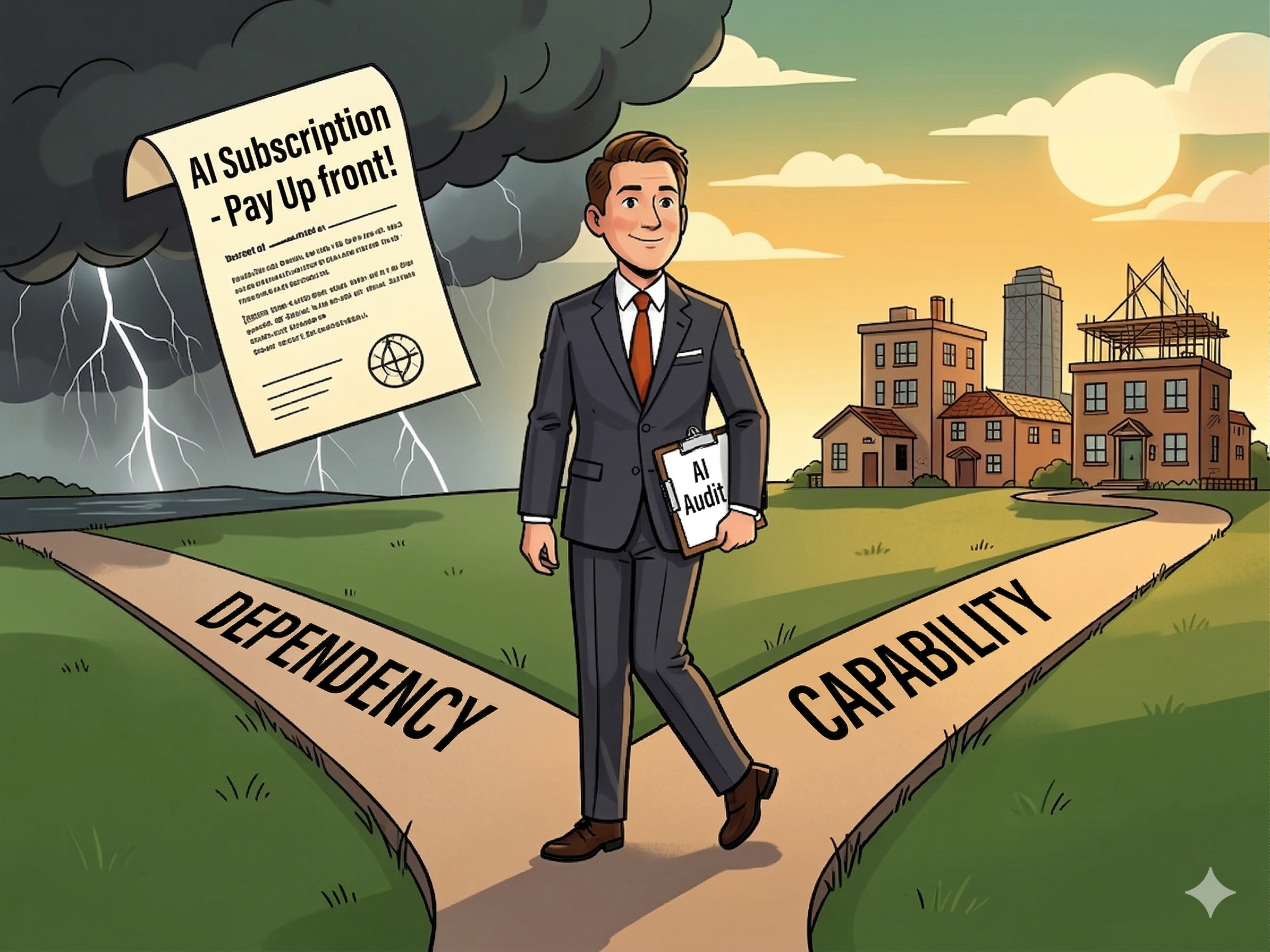

AI right now is your $1.50 Uber ride.

In 2014, Uber was losing money on every trip. Rides that cost $8 to deliver were going out the door for $1.50. They weren't in the transportation business. They were in the dependency business — using subsidized pricing to rewire behavior until hailing a cab felt absurd and their competitors with shallower pockets broke.

Today's AI companies are running the same playbook. They're spending hundreds of millions to train frontier models and offering you API access at prices that don't cover the cost. The goal isn't your monthly invoice. It's the workflows you build on top of it. The integrations. The automations. The internal tools your team can't live without. At the same time, every business will be incentivized to hollow out its internal expertise and its talent development pipelines. Soon AI won't be a luxury; it will be a necessity.

So the real question isn't whether you enjoy the $1.50 ride. It's whether what you've built can survive and stay profitable when the ride costs $11.50.

I'm a Martech architect and executive advisor. I've spent years helping operators translate technical complexity into decisions they can actually act on. I've watched businesses get burned by dependency cycles — not because they chose the wrong technology, but because they built on the assumption that current pricing was permanent. That mistake is about to repeat itself in AI.

Here's what I think most operators are missing — and what to do about it now, while the window is still open.

The Subsidy Is Real. So Is the Exit.

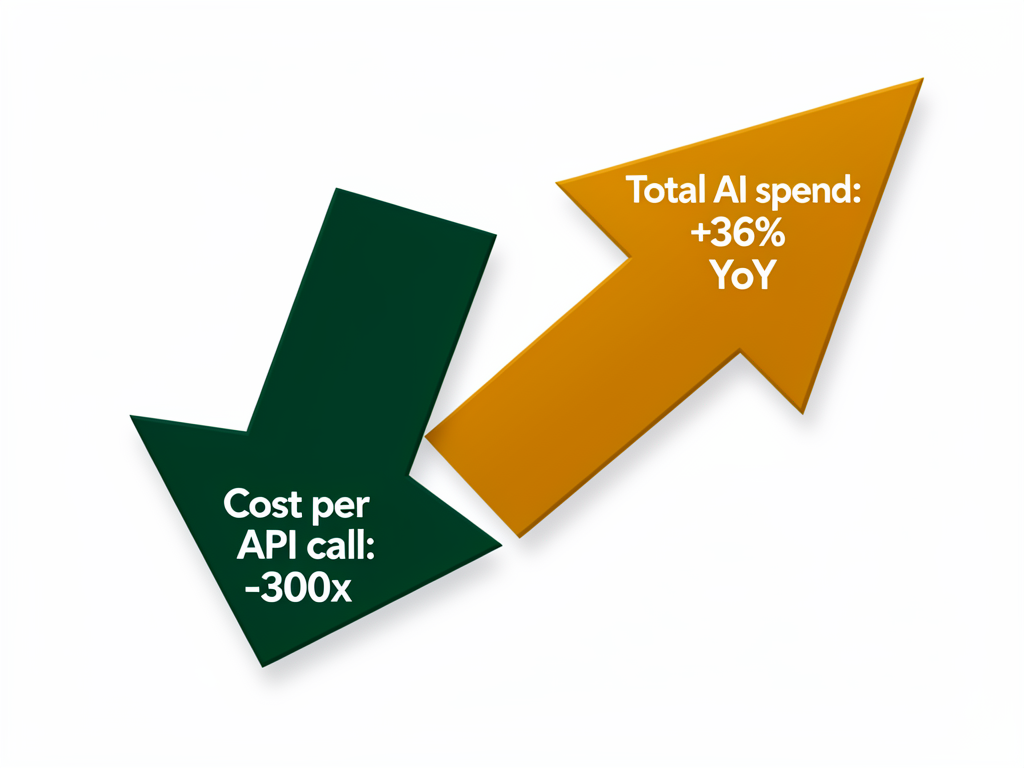

The cost data is genuinely remarkable. From 2023 to 2026, AI API pricing dropped approximately 300x — GPT-4's launch price was around $30 per million tokens; comparable capability now runs under $0.10.¹ That's not an incremental improvement. That's a structural price war between OpenAI, Anthropic, Google, and DeepSeek, each trying to lock developers and enterprises into their ecosystem before the land grab ends.

At the same time, enterprise AI spend is going the other direction. The average enterprise monthly AI spend rose 36% in a single year — from $63K in 2024 to $85.5K in 2025, with nearly half of companies now spending over $100K/month.² The cost per unit is collapsing. Total spend is climbing. Both things are true simultaneously.
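As a quick sanity check, the two trends can be worked through with nothing but the figures quoted above. This is a minimal sketch; the variable names are illustrative, and the prices are the approximate values cited in the text, not fresh data.

```python
# Figures quoted in the text; nothing here is new data.
GPT4_LAUNCH_PRICE = 30.00    # USD per million tokens (approximate launch price)
COMPARABLE_PRICE_NOW = 0.10  # USD per million tokens (upper bound quoted)

SPEND_2024 = 63_000  # average enterprise monthly AI spend, USD
SPEND_2025 = 85_500

# How many times cheaper a unit of comparable capability has become.
price_drop_factor = GPT4_LAUNCH_PRICE / COMPARABLE_PRICE_NOW

# Year-over-year growth in total monthly spend, as a percentage.
spend_growth_pct = (SPEND_2025 - SPEND_2024) / SPEND_2024 * 100

print(f"Unit price fell roughly {price_drop_factor:.0f}x")
print(f"Monthly spend rose roughly {spend_growth_pct:.0f}%")
```

The arithmetic reproduces the roughly 300x price drop and the 36% spend increase. Spend can only rise while unit prices collapse if usage is growing faster than prices are falling.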

Here's the structural truth nobody is saying out loud: the floor drops faster than the ceiling.³ Price compression has been most aggressive at the commodity tier — summarization, classification, simple drafting tasks. Frontier AI — the kind that does high-stakes reasoning, nuanced judgment, and genuinely differentiated work — is not commodity. It costs real money to train. Frontier model training costs are growing 2–3x annually.⁴ Premium capability will always carry premium pricing.

The canary is already singing. Microsoft is raising Microsoft 365 prices 13–17% in mid-2026, explicitly citing AI capability expansion as the reason.⁵ This is a large, established company monetizing dependency it already built. Other vendors will follow. If you're paying for AI-assisted tools today, the bill you're running is not the bill you'll be running in 18 months.

The subsidy era isn't ending tomorrow. But it will end. And the businesses that treat current AI pricing as a permanent condition are walking straight into the trap.

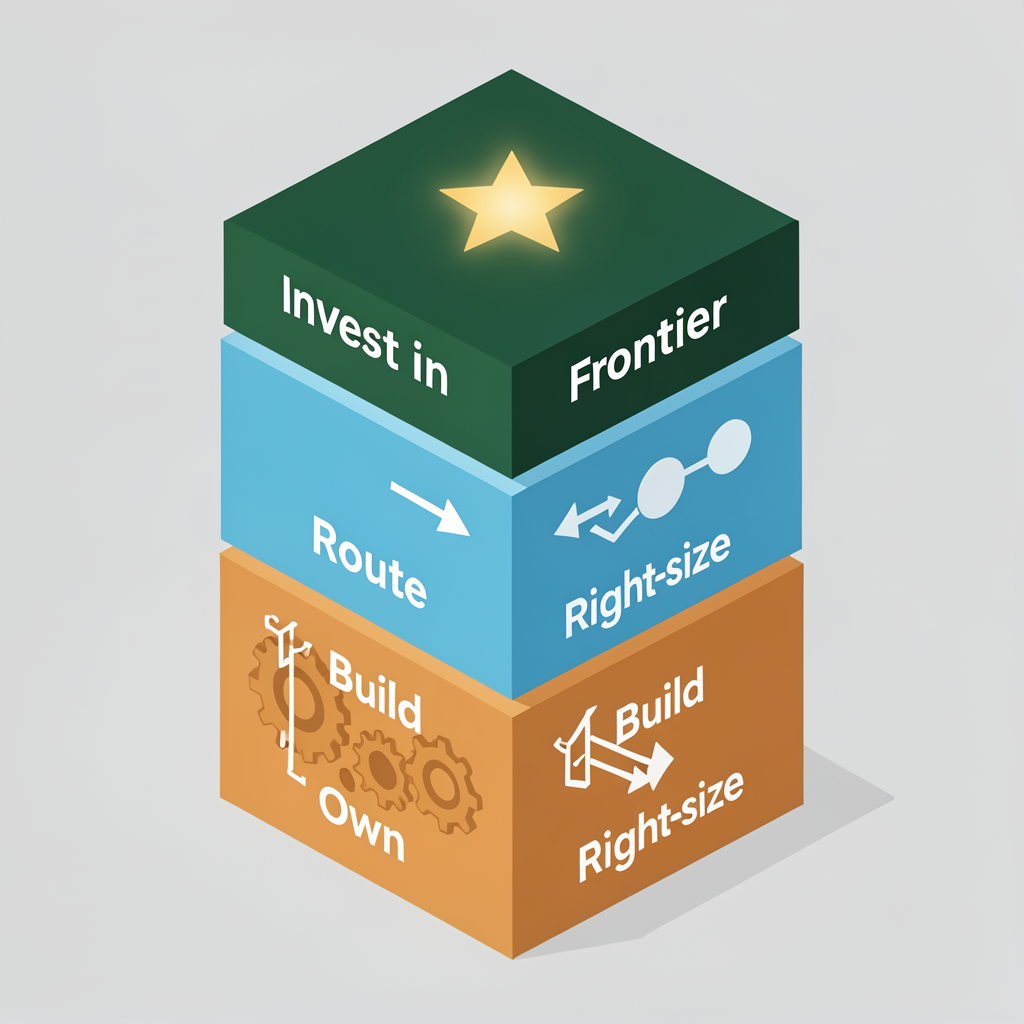

The Three-Layer Architecture Every Operator Should Know

The response to this isn't panic and it isn't denial. It's a simple architectural discipline — one you can apply without a team of ML engineers.

Every AI task your business runs today belongs in one of three tiers. Most operators haven't sorted their workloads this way. The ones who do will find themselves largely insulated from what's coming.

Layer 1: Build It Once, Run It Forever

The most durable AI play isn't using AI — it's using AI to build things you then own and run cheaply.

When you use a frontier model to generate a data pipeline, a pricing calculator, an onboarding flow, a contract parser — and that output becomes code running in your own infrastructure — you've converted an ongoing API dependency into a one-time cost. You consumed the intelligence once. Now you own the artifact.

This is different from using AI to draft an email or summarize a meeting. That's consumption. Build-and-own is production. The distinction matters enormously when prices normalize.

The practical question to ask about any AI workflow: "Is the output a thing I keep and reuse, or a thing I consume and repeat?" The more of your AI usage you can shift toward the former, the less exposed you are to future pricing.
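One way to make the keep-versus-consume question concrete is a break-even estimate: how many months until a one-time build pays for itself against a recurring API bill. The sketch below is an illustration under stated assumptions; the function name `breakeven_month` and every dollar figure are hypothetical, so substitute your own numbers.

```python
import math


def breakeven_month(build_cost, owned_monthly_cost, api_monthly_cost):
    """Return the first month in which the cumulative cost of building and
    running your own artifact is at or below the cumulative API cost.
    Returns None if the owned version never catches up."""
    monthly_saving = api_monthly_cost - owned_monthly_cost
    if monthly_saving <= 0:
        return None  # owning costs as much or more per month than the API
    return max(1, math.ceil(build_cost / monthly_saving))


# Hypothetical example: a $6,000 one-time build that costs $100/month to run,
# replacing a workflow that costs $1,100/month in API calls.
today = breakeven_month(6_000, 100, 1_100)

# The same workflow if the API bill tripled (an assumed scenario).
after_increase = breakeven_month(6_000, 100, 3_300)

print(f"Break-even at today's price: month {today}")
print(f"Break-even after a 3x increase: month {after_increase}")
```

With these made-up inputs the build pays back in month 6 at current pricing and in month 2 after a 3x increase, which is the point of the distinction: the more prices normalize, the faster owned artifacts pay for themselves.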

Layer 2: Route Work to the Cheapest Capable Model, Insource What You Can

Not every task needs the smartest AI in the room.

A hybrid model-routing approach — using frontier AI for complex tasks and lighter models for everything else — delivered a 39% cost reduction and 32–38% latency improvement in documented enterprise implementations.⁶ The quality trade-off on routine tasks is minimal. Open-source models like Llama 4 and Mistral now achieve 85–90% of frontier performance on many standard tasks, and self-hosting becomes cost-effective at approximately 2 million tokens per day.⁷

You don't need to build your own routing infrastructure to apply this principle. Start by classifying your current AI usage into two buckets: tasks where the output quality is genuinely business-critical versus tasks where "good enough" is actually good enough. Anything in the second bucket is a candidate for a cheaper model.

This isn't a technical decision. It's a classification exercise. You don't need an engineer to tell you that your weekly report summarization doesn't require GPT-5. That said, there are substantial cost savings to be had by planning to bring lower-tier models in-house and hosting your own cheap capacity for mundane tasks.
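For readers who do have a developer on hand, the two-bucket rule can be sketched in a few lines. This is a simplified illustration, not a production router: the tier names, the per-token prices, the sample tasks, and the helper names are all hypothetical. Only the roughly 2 million tokens per day self-hosting threshold comes from the text.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; replace with your vendors' rates.
PRICE_PER_MTOK = {"light": 0.10, "frontier": 10.00}

# Approximate self-hosting threshold cited in the text.
SELF_HOST_THRESHOLD_TOKENS_PER_DAY = 2_000_000


@dataclass
class Task:
    name: str
    business_critical: bool  # is output quality genuinely business-critical?
    tokens: int              # tokens consumed per day


def route(task: Task) -> str:
    """Send business-critical work to the frontier tier, everything else to the light tier."""
    return "frontier" if task.business_critical else "light"


def daily_cost(task: Task, tier: str) -> float:
    """Daily cost in USD of running a task on the given tier."""
    return task.tokens / 1_000_000 * PRICE_PER_MTOK[tier]


def worth_evaluating_self_hosting(tasks) -> bool:
    """True if routine (light-tier) volume reaches the cited threshold."""
    light_tokens = sum(t.tokens for t in tasks if route(t) == "light")
    return light_tokens >= SELF_HOST_THRESHOLD_TOKENS_PER_DAY


tasks = [
    Task("weekly report summary", business_critical=False, tokens=2_000_000),
    Task("contract risk review", business_critical=True, tokens=500_000),
    Task("support ticket tagging", business_critical=False, tokens=5_000_000),
]

routed_total = sum(daily_cost(t, route(t)) for t in tasks)
frontier_only_total = sum(daily_cost(t, "frontier") for t in tasks)

print(f"Routed: ${routed_total:.2f}/day, frontier-only: ${frontier_only_total:.2f}/day")
print(f"Evaluate self-hosting: {worth_evaluating_self_hosting(tasks)}")
```

The gap between the two totals depends entirely on the assumed prices and volumes, so treat the output as a template for your own numbers rather than a benchmark.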

Layer 3: Invest Intentionally in Frontier

Here's the flip side: there are tasks where frontier AI is genuinely the right tool, and you should be willing to pay for them.

High-stakes reasoning. Strategic analysis. Nuanced customer communications. Creative work where quality differentiates your output. Complex multi-step workflows where errors compound. These are places where the premium is earned.

The mistake isn't spending frontier prices. The mistake is spending frontier prices on tasks that don't require frontier intelligence. IDC projects that by 2028, 70% of top AI-driven enterprises will use advanced architectures to dynamically route tasks across model tiers.⁸ The companies building that muscle now — while it's cheap to experiment — will be the ones who manage through pricing normalization without disruption.

How to Do This Without an Engineering Team

Most of the people reading this aren't writing code or managing infrastructure. That's fine. The architecture decisions that protect your cost structure don't require a technical co-founder. They require a strategic conversation — and the person who should drive it is you.

Here's how to start this week:

1. Audit your AI spend by workflow. List every AI tool, API, and AI-assisted product you're paying for. For each one, ask: What does this produce? Is it something we reuse, or something we consume repeatedly? Is this a frontier task or a commodity task?

2. Classify everything into the three layers. Build-and-own. Route to cheaper. Invest in frontier. Most workflows will fall clearly into one bucket once you think about them explicitly.

3. Ask your vendors where their pricing is going. Not as an accusation — as a planning conversation. Any vendor who can't answer that question is one you should think carefully about deepening your dependency on.

4. Identify one workflow you could convert from API consumption to owned software. Just one. Use AI to generate the code. Run it yourself. You'll learn more from one concrete build-and-own project than from any amount of planning.
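The audit and classification steps above can live in a spreadsheet, but the same logic fits in a short script. The sketch below is one possible encoding, with hypothetical workflow names, costs, and a made-up price multiplier. It also assumes that anything classified as build-and-own gets converted to owned software and so drops out of recurring AI spend.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    monthly_cost: float    # current monthly AI spend, USD
    reusable_output: bool  # do we keep and reuse the output?
    needs_frontier: bool   # does quality here genuinely require a frontier model?


def classify(w: Workflow) -> str:
    """Map the audit answers onto the three layers."""
    if w.reusable_output:
        return "build-and-own"
    if w.needs_frontier:
        return "invest-in-frontier"
    return "route-to-cheaper"


def spend_by_layer(workflows):
    """Total current monthly spend per layer."""
    totals = defaultdict(float)
    for w in workflows:
        totals[classify(w)] += w.monthly_cost
    return dict(totals)


def exposed_spend_after_increase(workflows, price_multiplier):
    """Recurring monthly spend if prices rise by price_multiplier.
    Assumes build-and-own workflows have been converted and are no longer exposed."""
    exposed = sum(w.monthly_cost for w in workflows if classify(w) != "build-and-own")
    return exposed * price_multiplier


# Hypothetical audit results.
workflows = [
    Workflow("lead-scoring script generation", 400, reusable_output=True, needs_frontier=True),
    Workflow("meeting summaries", 900, reusable_output=False, needs_frontier=False),
    Workflow("strategic account analysis", 1_500, reusable_output=False, needs_frontier=True),
    Workflow("ticket classification", 600, reusable_output=False, needs_frontier=False),
]

for layer, total in spend_by_layer(workflows).items():
    print(f"{layer}: ${total:,.0f}/month")

print(f"Exposed spend at 2x pricing: ${exposed_spend_after_increase(workflows, 2.0):,.0f}/month")
```

Running the stress test with a few different multipliers shows how much of the bill is exposed to pricing normalization and how much has been moved out of its reach.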

The businesses that thrived after Uber's price normalization were the ones who used the cheap-ride window to build their own logistics muscle — delivery networks, routing software, supply chain integrations. They used the window. They didn't just enjoy the service.

The AI window is open right now. Costs are low enough to experiment. Switching costs are still manageable. The time to make intentional architecture decisions is before you're locked in, not after.

Where Are You?

Take 30 minutes this week. Map your current AI spend against the three layers. Find out where you're building dependency and where you're building capability.

One will age well. The other won't. Want to design your infrastructure to manage AI costs in the future? Let's chat!

Quick Reference: The Three-Layer AI Architecture

| Layer | What It Means | When to Use It | Cost Profile |
|---|---|---|---|
| Build & Own | Use AI to generate code, automations, or systems you then run yourself | Any repeatable workflow with a discrete, reusable output | One-time AI cost; low ongoing run cost |
| Route & Right-size | Use lighter/open-source models for high-volume, routine tasks. Insource and host your own AI models for lower-level tasks | Summarization, classification, drafting, low-stakes outputs | Fraction of frontier pricing; self-host above ~2M tokens/day |
| Invest in Frontier | Use the best available model for work where quality differentiates | High-stakes reasoning, strategy, complex judgment calls | Premium pricing; worth it for the right tasks |

Nate is the founder of PathPractical — executive advisory for technology roadmapping, planning, and architecture. He helps business leaders translate technical complexity into decisions they can act on.

References

1. AI API costs dropped ~300x from 2023–2026 — AI Price Index, TokenCost, 2026

2. Enterprise AI spend rose 36% year-over-year — State of AI Costs, CloudZero, 2025

3. "The price of 'good enough' dropped much faster than frontier models" — AI Price Index Analysis, TokenCost, 2026

4. Frontier model training costs growing 2–3x annually — The Rising Costs of Training Frontier AI Models, arXiv

5. Microsoft raising M365 prices 13–17% citing AI expansion — Microsoft AI Pricing Analysis, Monetizely

6. 39% cost reduction, 32–38% latency improvement from intelligent LLM routing — Intelligent LLM Routing in Enterprise AI, Requesty

7. Open-source models at 85–90% of frontier; self-hosting threshold ~2M tokens/day — Open Source vs Closed LLMs: The 2026 Decision Framework

8. IDC: 70% of top AI enterprises will use dynamic model routing by 2028 — The Future of AI Is Model Routing, IDC

9. 78% of IT leaders report unexpected charges from AI/consumption pricing — State of AI Costs, CloudZero, 2025

10. AI infrastructure and inference workloads as 65% of compute spend — AI Infrastructure Report, Deloitte, 2026

Chat with the author

If you'd like to connect and perhaps collaborate on something, I'd love to talk with you, whether you want to build your professional network or think there might be a great opportunity to work together or partner.